Ever since I learned about them, I've been fascinated by raytracers. Around 2000 I downloaded a copy of POV-Ray, installed it on my 333 MHz Celeron, and put together scenes of objects with various properties, then watched intently as they rendered very slowly.

The concept of a computer generating photorealistic images was completely fascinating to me, and I read through a good amount of the documentation on how it worked.

I had wanted to write my own for the longest time, but never knew enough of the actual math involved.

After spending lots of time building random objects in Second Life and getting a feel for 3D math, I decided to look up some guides on vector math, and worked out some of the equations necessary for raytracing, such as how to determine where a line intersects a plane.
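As an illustration of the line/plane equation mentioned above, here is a minimal sketch in Python rather than LSL. It is not the code from the raytracer scripts; the function names and the choice to describe a plane by a point and a normal are assumptions made for the example.

```python
def dot(a, b):
    """Dot product of two 3D vectors given as tuples."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def ray_plane_intersection(origin, direction, plane_point, plane_normal, eps=1e-9):
    """Return the point where a ray hits a plane, or None if it never does.

    The ray is origin + t * direction for t >= 0. The plane is described
    by any point on it plus its normal (an assumed representation).
    """
    denom = dot(direction, plane_normal)
    if abs(denom) < eps:
        return None  # ray runs parallel to the plane
    diff = tuple(p - o for p, o in zip(plane_point, origin))
    t = dot(diff, plane_normal) / denom
    if t < 0:
        return None  # the plane is behind the ray's starting point
    return tuple(o + t * d for o, d in zip(origin, direction))


# A ray looking straight down from z = 5 onto the ground plane z = 0.
hit = ray_plane_intersection((0, 0, 5), (0, 0, -1), (0, 0, 0), (0, 0, 1))
```

To test against a polygon instead of an infinite plane, the hit point would still need to be checked against the polygon's edges.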

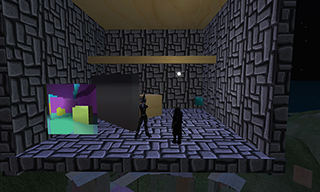

The hardest part about building the raytracer in Second Life, however, was the limited amount of data you could collect about the 3D world from a script. You could get an object's position, rotation, and size, but no data about shape. Initially for the raytracer, I assumed all objects were cubes.


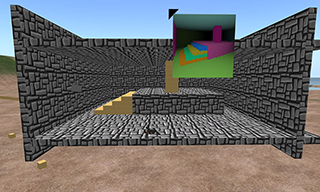

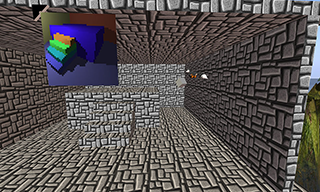

You can also see that initially I was using the Prim Printer to draw the raytraced images.

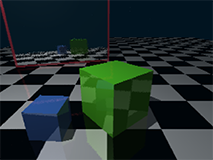

Once I had the script converting object data into polygons, and had the equations required for raytracing, I could begin rendering images.
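To give a rough idea of what turning object data into polygons can look like when every object is treated as a cube, here is a sketch in Python. It assumes a position, a size, and a unit quaternion rotation written as (x, y, z, s); all names are made up for the example, and the actual script may have done this differently.

```python
from itertools import product


def cross(a, b):
    """Cross product of two 3D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def rotate(v, q):
    """Rotate vector v by the unit quaternion q = (x, y, z, s)."""
    u, s = q[:3], q[3]
    t = tuple(2 * c for c in cross(u, v))
    ut = cross(u, t)
    return tuple(v[i] + s * t[i] + ut[i] for i in range(3))


# Corner indices for the six faces, matching the order product() yields.
# Winding order is not made consistent here.
CUBE_FACES = [(0, 1, 3, 2), (4, 5, 7, 6), (0, 1, 5, 4),
              (2, 3, 7, 6), (0, 2, 6, 4), (1, 3, 7, 5)]


def cube_polygons(position, size, rotation):
    """Return six quads (each a list of four corner points) for a box."""
    corners = []
    for signs in product((-1, 1), repeat=3):
        local = tuple(sign * extent / 2 for sign, extent in zip(signs, size))
        world = rotate(local, rotation)
        corners.append(tuple(p + w for p, w in zip(position, world)))
    return [[corners[i] for i in face] for face in CUBE_FACES]


# A 2 m cube at the origin with no rotation.
quads = cube_polygons((0, 0, 0), (2, 2, 2), (0, 0, 0, 1))
```

Each quad can then be handed to a plane intersection test followed by a check that the hit point lies inside the quad.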

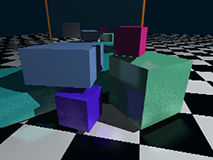

The raytracer was very slow, due in part to how slowly scripts run in Second Life. I decided to speed it up by parallelizing the script, splitting the rendering task across multiple scripts in a single object.
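One simple way to divide a render between several workers is to interleave the image rows, so that each worker gets a similar mix of easy and hard rows. The Python sketch below shows that idea only. How the actual scripts split the work is not described here, and `rows_for_worker` is a hypothetical name.

```python
def rows_for_worker(worker_index, worker_count, image_height):
    """Return the image rows a given worker should render.

    Rows are dealt out like cards: worker 0 gets rows 0, N, 2N, ...,
    worker 1 gets rows 1, N + 1, 2N + 1, ..., and so on.
    """
    return list(range(worker_index, image_height, worker_count))


# Four workers sharing a ten-row image.
assignments = [rows_for_worker(i, 4, 10) for i in range(4)]
```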

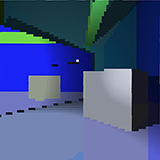

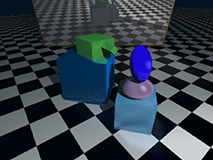

Once I had the base script, I began adding features. First I added Phong lighting. Next came shadows. Like most features you can add to a raytracer, this was surprisingly easy. Each time a ray from the camera hits the scene, the raytracer casts another ray toward the light source to see if anything is blocking it.
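The shadow test and a basic Phong term can be sketched as follows, in Python rather than LSL. This is an illustration under assumptions, not the raytracer's code: each object is represented by a hypothetical function that takes a ray origin and direction and returns the hit distance or `None`, and the `bias` offset is a common trick to stop a surface from shadowing itself.

```python
import math


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def scale(a, k):
    return tuple(x * k for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def in_shadow(hit_point, light_pos, occluders, bias=1e-4):
    """Cast a ray from the hit point to the light; True if anything blocks it."""
    to_light = sub(light_pos, hit_point)
    dist = math.sqrt(dot(to_light, to_light))
    direction = scale(to_light, 1.0 / dist)
    origin = add(hit_point, scale(direction, bias))  # step off the surface
    for intersect in occluders:
        t = intersect(origin, direction)
        if t is not None and 0 < t < dist - bias:
            return True  # something sits between the surface and the light
    return False


def phong(normal, to_light, to_eye, kd, ks, shininess):
    """Phong intensity for unit vectors: diffuse plus specular highlight."""
    n_dot_l = dot(normal, to_light)
    if n_dot_l <= 0:
        return 0.0  # the light is behind the surface
    # Mirror the light direction about the normal.
    reflected = sub(scale(normal, 2 * n_dot_l), to_light)
    specular = max(0.0, dot(reflected, to_eye)) ** shininess
    return kd * n_dot_l + ks * specular
```

A point found to be in shadow would typically receive only ambient light, skipping the Phong term for that light source.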

Next came reflections. Since I already had code for casting a ray and finding where it hit the scene, adding reflections was just as easy. I simply bounced the ray off the surface of the object, cast another ray, and blended the results based on the reflectivity of the first object.
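The bounce itself is a one-line vector formula, and the blend is a weighted average. Here is a small Python sketch of both. The function names and the 0-to-1 reflectivity scale are assumptions for the example, not taken from the scripts.

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def reflect(direction, normal):
    """Mirror an incoming direction about a unit surface normal."""
    d_dot_n = dot(direction, normal)
    return tuple(d - 2 * d_dot_n * n for d, n in zip(direction, normal))


def blend(local_color, reflected_color, reflectivity):
    """Mix the surface's own color with what the reflected ray saw.

    reflectivity is assumed to run from 0 (matte) to 1 (perfect mirror).
    """
    return tuple(l * (1 - reflectivity) + r * reflectivity
                 for l, r in zip(local_color, reflected_color))


# A ray heading down and to the right bounces off a floor facing up.
bounced = reflect((1, -1, 0), (0, 1, 0))
```

The color for the reflected ray comes from running the same tracing routine again from the hit point, usually with a depth limit so two mirrors facing each other cannot recurse forever.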

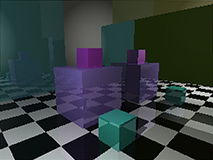

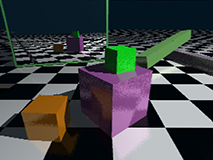

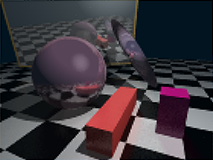

Once I had reflections, I began experimenting with diffused reflections. I remembered from when I first read about raytracers that diffused reflections were due to imperfections along the surface of an object, causing light to scatter in all directions. This is why, on some shiny objects, the reflected image is very blurry. I implemented my diffused reflections by having light, when it reflects, jitter off in a random direction. Since this would make many objects look very rough, I smoothed out the image by adding full-screen anti-aliasing.

This slowed down the computations somewhat, but it resulted in soft reflections.
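A rough Python sketch of the two pieces described above: nudging the reflected direction by a random amount, and averaging several samples per pixel to smooth out the resulting noise. The `roughness` parameter, the uniform random offset, and the `shade` callback are all assumptions for illustration, and the real scripts may jitter and sample differently.

```python
import math
import random


def jitter(direction, roughness, rng):
    """Nudge a direction by a random offset and renormalize it.

    roughness of 0 leaves the direction unchanged; larger values
    scatter the reflected rays more widely, blurring the reflection.
    """
    offset = tuple(rng.uniform(-roughness, roughness) for _ in range(3))
    v = tuple(d + o for d, o in zip(direction, offset))
    norm = math.sqrt(sum(c * c for c in v))
    if norm == 0:
        return direction
    return tuple(c / norm for c in v)


def supersample(shade, px, py, samples, rng):
    """Average several randomly placed samples inside one pixel.

    shade(x, y) is a hypothetical callback that traces one ray through
    image position (x, y) and returns an (r, g, b) color.
    """
    total = [0.0, 0.0, 0.0]
    for _ in range(samples):
        color = shade(px + rng.random(), py + rng.random())
        for i in range(3):
            total[i] += color[i]
    return tuple(c / samples for c in total)
```

Because each sample takes a different random bounce, averaging more samples per pixel trades render time for a smoother blur.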

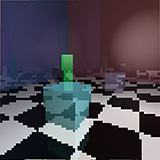

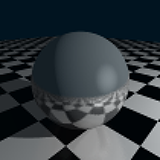

Finally, wanting to have a ray tracer that could render the stereotypical "chrome sphere on checkerboard" image, I worked out the math for sphere/spheroid intersections.
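For reference, the standard ray/sphere test comes down to solving a quadratic. Here it is as a Python sketch, not the LSL from the scripts, with names chosen for the example.

```python
import math


def ray_sphere_intersection(origin, direction, center, radius, eps=1e-9):
    """Return the distance along the ray to the nearest hit, or None.

    Substituting origin + t * direction into the sphere equation
    |p - center|^2 = radius^2 gives a quadratic a*t^2 + b*t + c = 0.
    """
    oc = tuple(o - c for o, c in zip(origin, center))
    a = sum(d * d for d in direction)
    b = 2 * sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - 4 * a * c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    t = (-b - root) / (2 * a)  # nearer of the two crossings
    if t < eps:
        t = (-b + root) / (2 * a)  # ray starts inside the sphere
    if t < eps:
        return None  # the sphere is behind the ray
    return t


# A ray from z = 5 aimed at a unit sphere at the origin hits at distance 4.
t_hit = ray_sphere_intersection((0, 0, 5), (0, 0, -1), (0, 0, 0), 1)
```

One common way to extend this to a spheroid is to transform the ray into a space where the spheroid becomes a unit sphere, run the same test, and map the result back.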

Unfortunately, after adding all these features, I began getting out-of-memory errors whenever I ran the script in the LSL interpreter. Now that the Mono VM has been deployed, however, scripts have more memory and run faster, so the raytracer no longer crashes randomly when a scene gets too complex.

All of these scenes were built by using Second Life's provided building tools, then pointing the raytracer at them. No prims were harmed in the making of these images.

Download: raytracer-controller.lsl.txt

Download: raytracer.lsl.txt

Note: Scripts are provided for reference only, and depend on a screen not provided.


Site created by Hadley.